1. Introduction

2. Methodological foundations of PINNs for forward and inverse PDE problems

2.1. Solving forward PDE problems using PINNs

2.2. Solving inverse PDE problems using PINNs

3. Data-driven methods integrating physical constraints

3.1. Inference of V(x) at boundary points

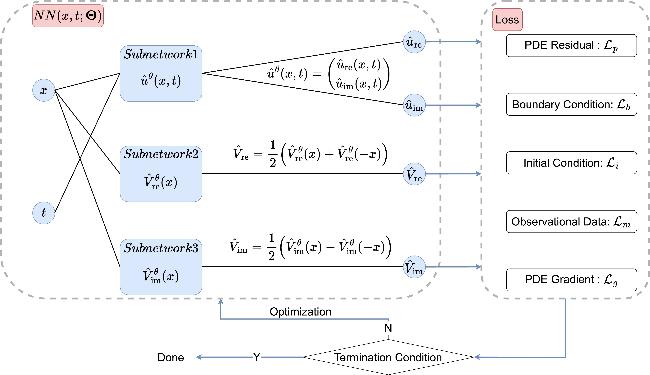

3.2. PPTS-PINNs

Figure 1. PPTS-PINNs: the left block represents a neural network parameterized by $\Theta$, while the right block denotes the loss function components.

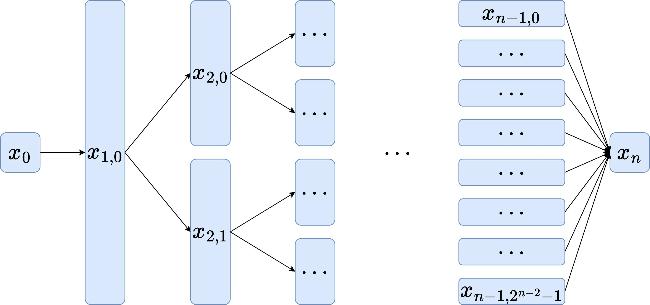

Figure 2. Binary structured neural network.

4. Numerical results

4.1. Two classes of NLSEs

4.2. Inversion results with exact data

Table 1. Summary of hyperparameters and sampling sizes for PPTS-PINNs applied to NLSE1 and NLSE2.

| Equation | Hidden layers ($\hat{u}^{\theta}$) | Hidden layers ($\hat{V}_{\rm re}^{\theta}$) | Hidden layers ($\hat{V}_{\rm im}^{\theta}$) | Width ($\hat{u}^{\theta}$) | Width ($\hat{V}_{\rm re}^{\theta}$) | Width ($\hat{V}_{\rm im}^{\theta}$) | $\lvert \mathcal{T}_{p} \rvert$ | $\lvert \mathcal{T}_{b}^{l} \cup \mathcal{T}_{b}^{r} \rvert$ | $\lvert \mathcal{T}_{i} \rvert$ | $\lvert \mathcal{T}_{m} \rvert$ |
|---|---|---|---|---|---|---|---|---|---|---|
| NLSE1 | 4 | 4 | 4 | 16 | 16 | 16 | 860 | 400 | 400 | 860 |
| NLSE2 | 3 | 3 | 3 | 16 | 16 | 16 | 860 | 400 | 400 | 860 |

Table 2. Comparison of computational times for PPTS-PINNs and mPINNs, measured separately on CPU and GPU, along with parameter counts and training epochs.

| Equation | Method | Parameters | Epochs | Time (s) [CPU/GPU] |
|---|---|---|---|---|
| NLSE1 | PPTS-PINNs | 2628 | 20 000 | 531/1163 |
| NLSE1 | mPINNs | 7396 | 20 000 | 218/347 |
| NLSE2 | PPTS-PINNs | 1812 | 20 000 | 592/1350 |
| NLSE2 | mPINNs | 5044 | 20 000 | 259/398 |
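The PPTS-PINNs parameter counts in Table 2 can be cross-checked against the layer settings in Table 1. The sketch below assumes plain fully connected layers with biases, a solution network mapping $(x, t)$ to the real and imaginary parts of $\hat{u}$, and two potential networks each mapping $x$ to a scalar. These input and output sizes, and the helper names `mlp_param_count` and `ppts_param_count`, are illustrative assumptions rather than details taken from the paper; under them the totals agree with the tabulated values.

```python
def mlp_param_count(n_in, n_out, hidden_layers, width):
    """Count weights plus biases of a fully connected network."""
    sizes = [n_in] + [width] * hidden_layers + [n_out]
    # Each layer contributes (fan_in * fan_out) weights and fan_out biases.
    return sum(a * b + b for a, b in zip(sizes, sizes[1:]))


def ppts_param_count(hidden_layers, width):
    """Total over the three sub-networks, under the assumed shapes."""
    # Assumption: u-network takes (x, t) and returns (Re u, Im u).
    u_net = mlp_param_count(2, 2, hidden_layers, width)
    # Assumption: each potential network takes x and returns one value.
    v_re = mlp_param_count(1, 1, hidden_layers, width)
    v_im = mlp_param_count(1, 1, hidden_layers, width)
    return u_net + v_re + v_im


nlse1_params = ppts_param_count(hidden_layers=4, width=16)
nlse2_params = ppts_param_count(hidden_layers=3, width=16)
print(nlse1_params, nlse2_params)  # 2628 1812
```

The agreement with Table 2 suggests, but does not prove, that the sub-networks are standard multilayer perceptrons of the stated depth and width.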

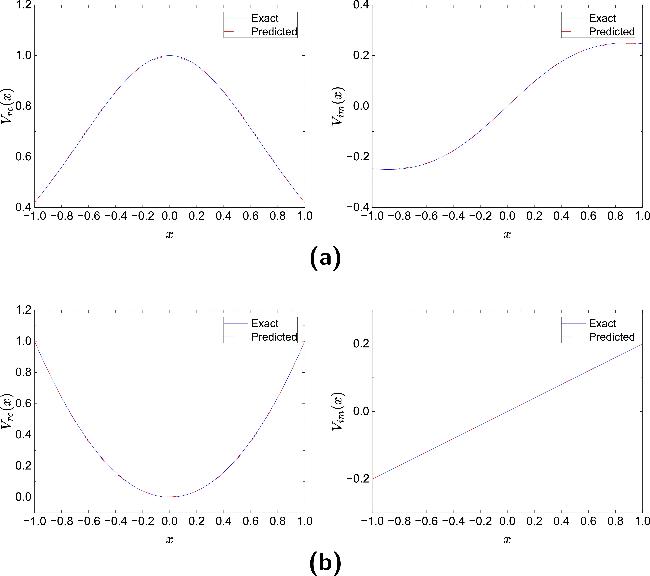

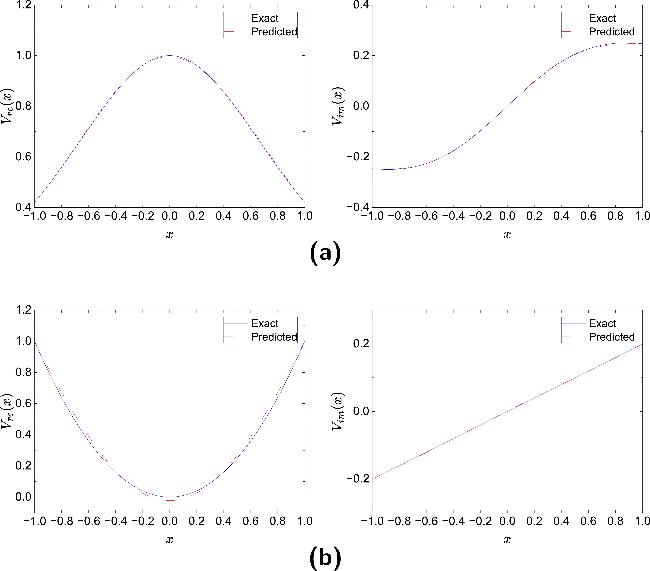

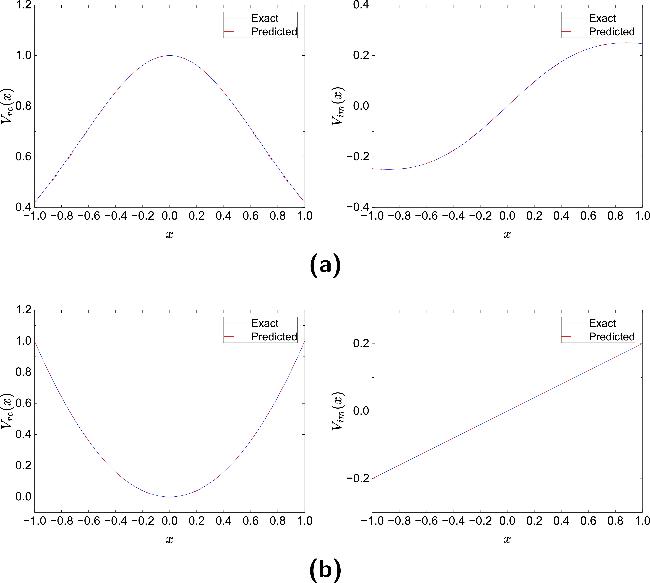

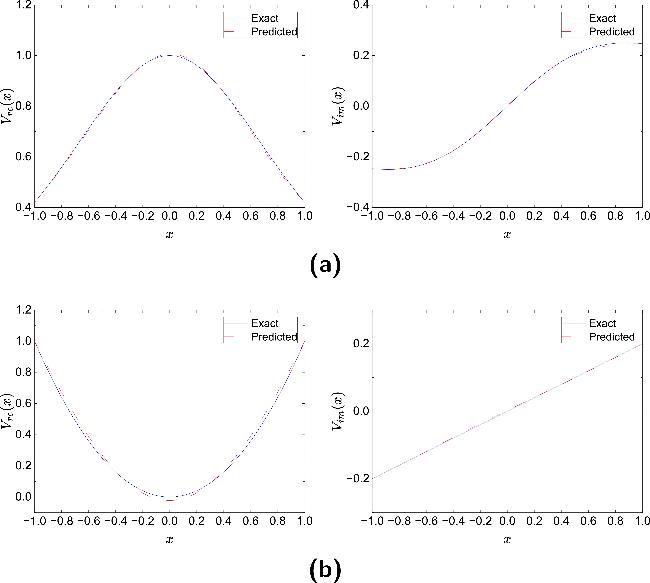

Figure 3. Comparison between the potential $\hat{V}(x)$ reconstructed by PPTS-PINNs and the exact potential $V(x)$ in NLSE1 (a) and NLSE2 (b).

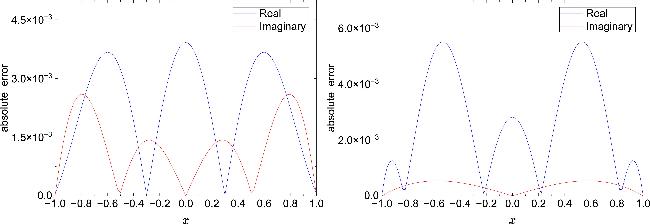

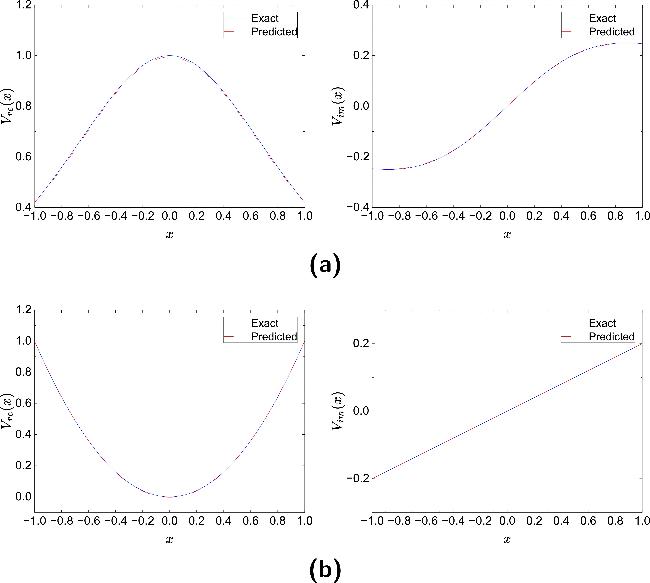

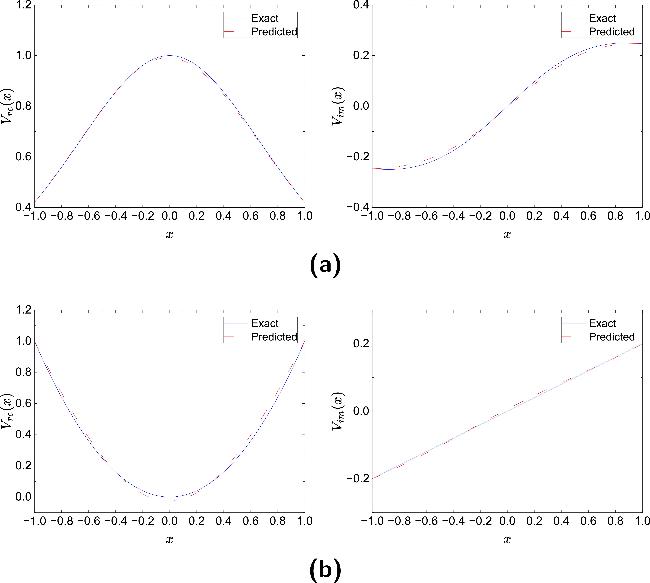

Figure 4. Absolute errors of the potential reconstructed by PPTS-PINNs in NLSE1 (left) and NLSE2 (right).

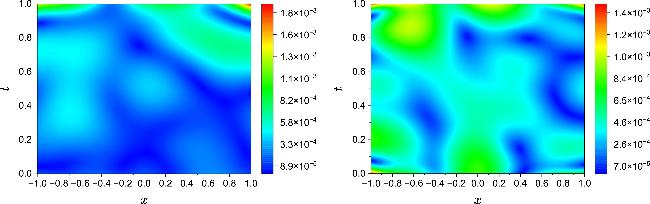

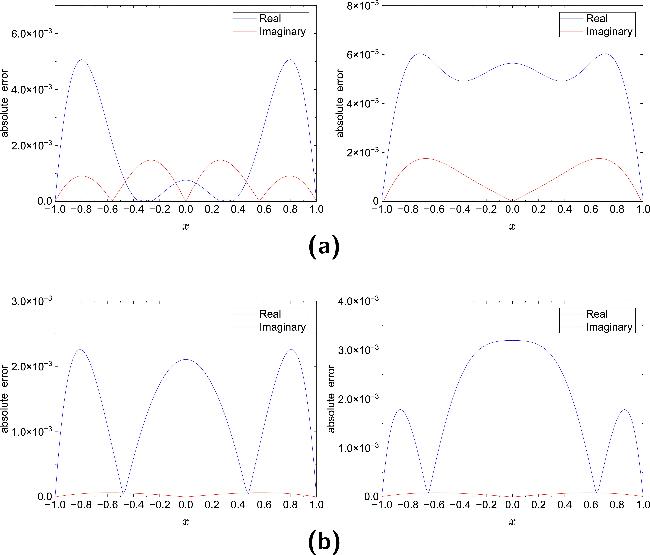

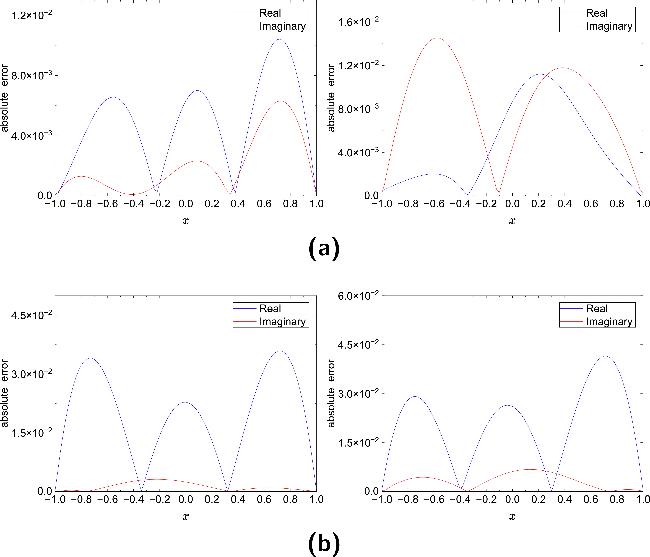

Figure 5. Absolute error distributions of the solution $\hat{u}(x,t)$ reconstructed by PPTS-PINNs with respect to the exact solution $u(x,t)$ for NLSE1 (left) and NLSE2 (right).

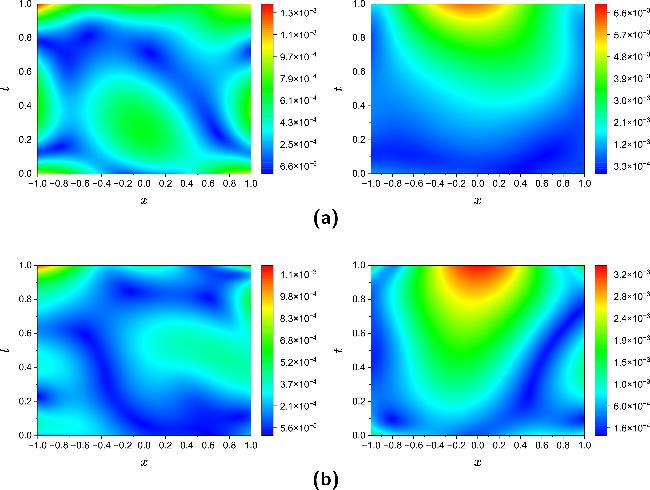

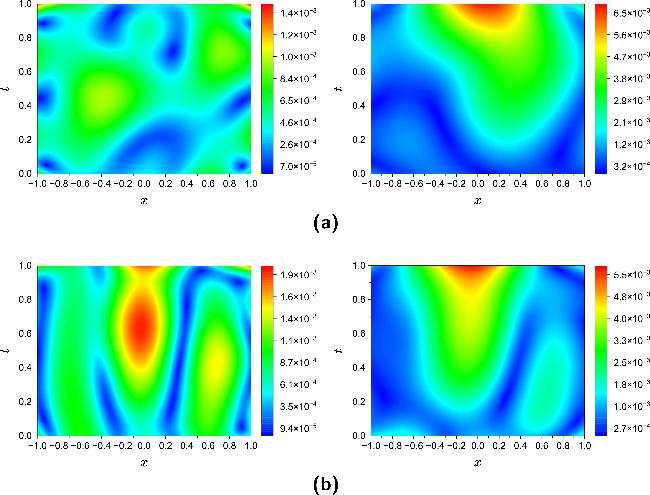

Figure 6. Comparison between the potential $\hat{V}(x)$ reconstructed by mPINNs and the exact potential $V(x)$ in NLSE1 (a) and NLSE2 (b).

Figure 7. Absolute errors of the potential reconstructed by mPINNs in NLSE1 (left) and NLSE2 (right).

Figure 8. Absolute error distributions of the solution $\hat{u}(x,t)$ reconstructed by mPINNs with respect to the exact solution $u(x,t)$ for NLSE1 (left) and NLSE2 (right).

Table 3. Error metrics for the real potential $V_{\rm re}$, imaginary potential $V_{\rm im}$, and solution $u(x,t)$ using PPTS-PINNs and mPINNs.

| Equation | Method | $V_{\rm re}(x)$: $\ell_{\infty}$ | $V_{\rm re}(x)$: $\ell_{2}^{\rm rel}$ | $V_{\rm im}(x)$: $\ell_{\infty}$ | $V_{\rm im}(x)$: $\ell_{2}^{\rm rel}$ | $u(x,t)$: $\ell_{\infty}$ | $u(x,t)$: $\ell_{2}^{\rm rel}$ |
|---|---|---|---|---|---|---|---|
| NLSE1 | PPTS-PINNs | $3.92 \times 10^{-3}$ | $3.30 \times 10^{-3}$ | $2.60 \times 10^{-3}$ | $7.63 \times 10^{-3}$ | $1.89 \times 10^{-3}$ | $3.88 \times 10^{-4}$ |
| NLSE1 | mPINNs | $5.22 \times 10^{-3}$ | $4.12 \times 10^{-3}$ | $4.25 \times 10^{-3}$ | $1.04 \times 10^{-2}$ | $2.25 \times 10^{-3}$ | $5.34 \times 10^{-4}$ |
| NLSE2 | PPTS-PINNs | $5.51 \times 10^{-3}$ | $6.88 \times 10^{-3}$ | $5.37 \times 10^{-4}$ | $3.29 \times 10^{-3}$ | $1.46 \times 10^{-3}$ | $5.72 \times 10^{-4}$ |
| NLSE2 | mPINNs | $3.48 \times 10^{-2}$ | $4.75 \times 10^{-2}$ | $1.81 \times 10^{-3}$ | $9.09 \times 10^{-3}$ | $1.60 \times 10^{-3}$ | $9.93 \times 10^{-4}$ |
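For readers who want to reproduce metrics of the kind reported in Table 3, the sketch below computes a maximum absolute error and a relative $\ell_2$ error on sampled values. It assumes the usual definitions, $\ell_\infty = \max_k |\hat{f}_k - f_k|$ and $\ell_2^{\rm rel} = \|\hat{f} - f\|_2 / \|f\|_2$; the exact definitions and evaluation grid used in the paper are not given in this section, and the sample values below are hypothetical.

```python
import math


def linf_error(pred, exact):
    """Largest pointwise absolute error; also works for complex samples."""
    return max(abs(p - e) for p, e in zip(pred, exact))


def rel_l2_error(pred, exact):
    """Euclidean norm of the error divided by the norm of the reference."""
    num = math.sqrt(sum(abs(p - e) ** 2 for p, e in zip(pred, exact)))
    den = math.sqrt(sum(abs(e) ** 2 for e in exact))
    return num / den


# Hypothetical samples, not data from the paper.
exact = [1.0, 2.0, 2.0]
pred = [1.0, 2.0, 2.3]

print(linf_error(pred, exact))    # approximately 0.3
print(rel_l2_error(pred, exact))  # approximately 0.1
```

Because `abs` returns the modulus of a complex number, the same two functions apply to the complex-valued solution $u(x,t)$ as well as to the real-valued potentials.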

4.3. Inversion results with noisy data

Table 4. Summary of hyperparameters and sampling sizes for PPTS-PINNs applied to NLSE1 and NLSE2 under varying noise levels (1% and 5%).

| Equation | Hidden layers ($\hat{u}^{\theta}$) | Hidden layers ($\hat{V}_{\rm re}^{\theta}$) | Hidden layers ($\hat{V}_{\rm im}^{\theta}$) | Width ($\hat{u}^{\theta}$) | Width ($\hat{V}_{\rm re}^{\theta}$) | Width ($\hat{V}_{\rm im}^{\theta}$) | $\lvert \mathcal{T}_{p} \rvert$ | $\lvert \mathcal{T}_{b}^{l} \cup \mathcal{T}_{b}^{r} \rvert$ | $\lvert \mathcal{T}_{i} \rvert$ | $\lvert \mathcal{T}_{m} \rvert$ | $\lvert \mathcal{T}_{g} \rvert$ |
|---|---|---|---|---|---|---|---|---|---|---|---|
| NLSE1 (1% noise) | 4 | 4 | 4 | 32 | 32 | 32 | 3440 | 800 | 800 | 860 | 3440 |
| NLSE2 (1% noise) | 3 | 3 | 3 | 32 | 32 | 32 | 3440 | 800 | 800 | 860 | 3440 |
| NLSE1 (5% noise) | 4 | 4 | 4 | 64 | 32 | 32 | 7740 | 1600 | 1600 | 860 | 7740 |
| NLSE2 (5% noise) | 3 | 3 | 3 | 64 | 32 | 32 | 7740 | 1600 | 1600 | 860 | 7740 |

Table 5. Comparison of computational times for PPTS-PINNs and mPINNs under varying noise levels (1% and 5%), along with parameter counts and training epochs.

| Equation | Method | Parameters | Epochs | Time (s) |
|---|---|---|---|---|
| NLSE1 (1% noise) | PPTS-PINNs | 9860 | 30 000 | 3750 |
| NLSE1 (1% noise) | mPINNs | 28 612 | 30 000 | 582 |
| NLSE2 (1% noise) | PPTS-PINNs | 6692 | 30 000 | 5225 |
| NLSE2 (1% noise) | mPINNs | 19 300 | 30 000 | 678 |
| NLSE1 (5% noise) | PPTS-PINNs | 19 332 | 30 000 | 3762 |
| NLSE1 (5% noise) | mPINNs | 50 436 | 30 000 | 749 |
| NLSE2 (5% noise) | PPTS-PINNs | 13 060 | 30 000 | 5579 |
| NLSE2 (5% noise) | mPINNs | 33 924 | 30 000 | 975 |

Figure 9. Comparison between the potential $\hat{V}(x)$ reconstructed by PPTS-PINNs and the exact potential $V(x)$ in NLSE1 (a) and NLSE2 (b) under 1% noise level.

Figure 10. Comparison between the potential $\hat{V}(x)$ reconstructed by PPTS-PINNs and the exact potential $V(x)$ in NLSE1 (a) and NLSE2 (b) under 5% noise level.

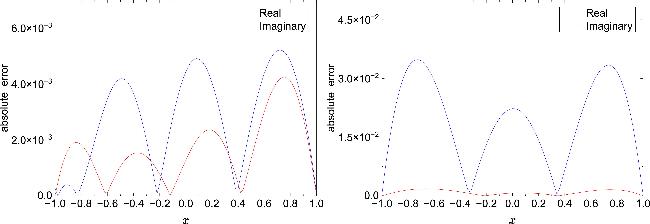

Figure 11. Absolute error distributions of the potential reconstructed by PPTS-PINNs in NLSE1 (a) and NLSE2 (b) under different noise levels. Left: 1% noise; right: 5% noise.

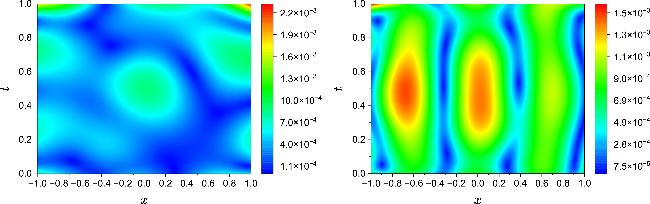

Figure 12. Absolute error distributions of the solution $\hat{u}(x,t)$ reconstructed by PPTS-PINNs with respect to the exact solution $u(x,t)$ in NLSE1 (a) and NLSE2 (b) under different noise levels. Left: 1% noise; right: 5% noise.

Figure 13. Comparison between the potential $\hat{V}(x)$ reconstructed by mPINNs and the exact potential $V(x)$ in NLSE1 (a) and NLSE2 (b) under 1% noise level.

Figure 14. Comparison between the potential $\hat{V}(x)$ reconstructed by mPINNs and the exact potential $V(x)$ in NLSE1 (a) and NLSE2 (b) under 5% noise level.

Figure 15. Absolute error distributions of the potential reconstructed by mPINNs in NLSE1 (a) and NLSE2 (b) under different noise levels. Left: 1% noise; right: 5% noise.

Figure 16. Absolute error distributions of the solution $\hat{u}(x,t)$ reconstructed by mPINNs with respect to the exact solution $u(x,t)$ in NLSE1 (a) and NLSE2 (b) under different noise levels. Left: 1% noise; right: 5% noise.

Table 6. Error metrics for the real potential $V_{\rm re}$, imaginary potential $V_{\rm im}$, and solution $u(x,t)$ under varying noise levels (1% and 5%), using PPTS-PINNs and mPINNs.

| Equation | Method | $V_{\rm re}(x)$: $\ell_{\infty}$ | $V_{\rm re}(x)$: $\ell_{2}^{\rm rel}$ | $V_{\rm im}(x)$: $\ell_{\infty}$ | $V_{\rm im}(x)$: $\ell_{2}^{\rm rel}$ | $u(x,t)$: $\ell_{\infty}$ | $u(x,t)$: $\ell_{2}^{\rm rel}$ |
|---|---|---|---|---|---|---|---|
| NLSE1 (1% noise) | PPTS-PINNs | $5.07 \times 10^{-3}$ | $3.35 \times 10^{-3}$ | $1.48 \times 10^{-3}$ | $4.61 \times 10^{-3}$ | $1.39 \times 10^{-3}$ | $4.67 \times 10^{-4}$ |
| NLSE1 (1% noise) | mPINNs | $1.04 \times 10^{-2}$ | $7.24 \times 10^{-3}$ | $6.31 \times 10^{-3}$ | $1.42 \times 10^{-2}$ | $1.48 \times 10^{-3}$ | $5.96 \times 10^{-4}$ |
| NLSE2 (1% noise) | PPTS-PINNs | $2.26 \times 10^{-3}$ | $3.41 \times 10^{-3}$ | $6.63 \times 10^{-5}$ | $4.06 \times 10^{-4}$ | $1.14 \times 10^{-3}$ | $3.08 \times 10^{-4}$ |
| NLSE2 (1% noise) | mPINNs | $3.59 \times 10^{-2}$ | $4.89 \times 10^{-2}$ | $3.13 \times 10^{-3}$ | $1.40 \times 10^{-2}$ | $1.94 \times 10^{-3}$ | $1.14 \times 10^{-3}$ |
| NLSE1 (5% noise) | PPTS-PINNs | $6.03 \times 10^{-3}$ | $6.55 \times 10^{-3}$ | $1.75 \times 10^{-3}$ | $6.01 \times 10^{-3}$ | $6.29 \times 10^{-3}$ | $2.53 \times 10^{-3}$ |
| NLSE1 (5% noise) | mPINNs | $1.12 \times 10^{-2}$ | $7.52 \times 10^{-3}$ | $1.45 \times 10^{-2}$ | $4.83 \times 10^{-2}$ | $6.68 \times 10^{-3}$ | $2.74 \times 10^{-3}$ |
| NLSE2 (5% noise) | PPTS-PINNs | $3.20 \times 10^{-3}$ | $4.77 \times 10^{-3}$ | $8.63 \times 10^{-5}$ | $5.34 \times 10^{-4}$ | $3.38 \times 10^{-3}$ | $1.65 \times 10^{-3}$ |
| NLSE2 (5% noise) | mPINNs | $4.15 \times 10^{-2}$ | $5.16 \times 10^{-2}$ | $6.79 \times 10^{-3}$ | $3.28 \times 10^{-2}$ | $5.76 \times 10^{-3}$ | $2.54 \times 10^{-3}$ |

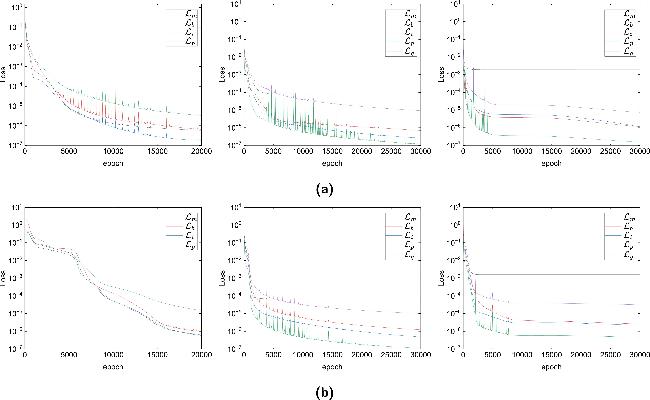

Figure 17. Loss function curves of the PPTS-PINNs method during training for NLSE1 (a) and NLSE2 (b) under different noise levels. Left: exact data (no noise); middle: 1% noise; right: 5% noise. The curves show the evolution of individual loss components over epochs.

Table 7. Final values of individual loss components of the PPTS-PINNs method for NLSE1 and NLSE2 under varying noise levels. Missing entries are indicated by 'n/a'.

| Equation | $\mathcal{L}_{p}$ | $\mathcal{L}_{b}$ | $\mathcal{L}_{i}$ | $\mathcal{L}_{m}$ | $\mathcal{L}_{g}$ | $\mathcal{L}$ |
|---|---|---|---|---|---|---|
| NLSE1 | $3.29 \times 10^{-6}$ | $6.44 \times 10^{-7}$ | $1.79 \times 10^{-7}$ | $5.75 \times 10^{-7}$ | n/a | $4.69 \times 10^{-6}$ |
| NLSE2 | $1.54 \times 10^{-5}$ | $1.39 \times 10^{-6}$ | $6.20 \times 10^{-7}$ | $7.68 \times 10^{-7}$ | n/a | $1.82 \times 10^{-5}$ |
| NLSE1 (1% noise) | $1.18 \times 10^{-7}$ | $6.95 \times 10^{-7}$ | $2.58 \times 10^{-7}$ | $7.78 \times 10^{-5}$ | $9.27 \times 10^{-6}$ | $8.81 \times 10^{-5}$ |
| NLSE2 (1% noise) | $1.12 \times 10^{-7}$ | $1.22 \times 10^{-6}$ | $4.83 \times 10^{-7}$ | $6.40 \times 10^{-5}$ | $1.01 \times 10^{-5}$ | $7.59 \times 10^{-5}$ |
| NLSE1 (5% noise) | $1.55 \times 10^{-7}$ | $1.16 \times 10^{-6}$ | $1.14 \times 10^{-6}$ | $1.93 \times 10^{-3}$ | $7.35 \times 10^{-6}$ | $1.94 \times 10^{-3}$ |
| NLSE2 (5% noise) | $4.61 \times 10^{-7}$ | $2.57 \times 10^{-6}$ | $2.20 \times 10^{-6}$ | $1.58 \times 10^{-3}$ | $3.16 \times 10^{-5}$ | $1.62 \times 10^{-3}$ |
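A quick arithmetic check on Table 7: the sketch below adds the individual loss components in each row and compares the result with the reported total $\mathcal{L}$. This section does not state how the components are weighted, so the unweighted sum is an assumption; the tabulated values are consistent with it to within the rounding of three significant figures. The names `table7`, `summed_loss` and `relative_gap` are illustrative.

```python
# Final loss values copied from Table 7:
# (L_p, L_b, L_i, L_m, L_g, reported total L).
# None marks the L_g entries that the table leaves blank for exact data.
table7 = {
    "NLSE1": (3.29e-6, 6.44e-7, 1.79e-7, 5.75e-7, None, 4.69e-6),
    "NLSE2": (1.54e-5, 1.39e-6, 6.20e-7, 7.68e-7, None, 1.82e-5),
    "NLSE1 (1% noise)": (1.18e-7, 6.95e-7, 2.58e-7, 7.78e-5, 9.27e-6, 8.81e-5),
    "NLSE2 (1% noise)": (1.12e-7, 1.22e-6, 4.83e-7, 6.40e-5, 1.01e-5, 7.59e-5),
    "NLSE1 (5% noise)": (1.55e-7, 1.16e-6, 1.14e-6, 1.93e-3, 7.35e-6, 1.94e-3),
    "NLSE2 (5% noise)": (4.61e-7, 2.57e-6, 2.20e-6, 1.58e-3, 3.16e-5, 1.62e-3),
}


def summed_loss(components):
    """Unweighted sum of the available components (assumed weighting)."""
    return sum(c for c in components if c is not None)


def relative_gap(row):
    """Relative difference between the summed components and the total."""
    *components, reported = row
    return abs(summed_loss(components) - reported) / reported


gaps = {name: relative_gap(row) for name, row in table7.items()}
for name, gap in gaps.items():
    print(f"{name}: relative gap {gap:.2%}")
```

In the noisy cases the measurement term $\mathcal{L}_{m}$ accounts for most of the total, which is visible directly from the rows above.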