1. Introduction

2. Data simulation and preprocessing

2.1. CMB temperature and lensing potential

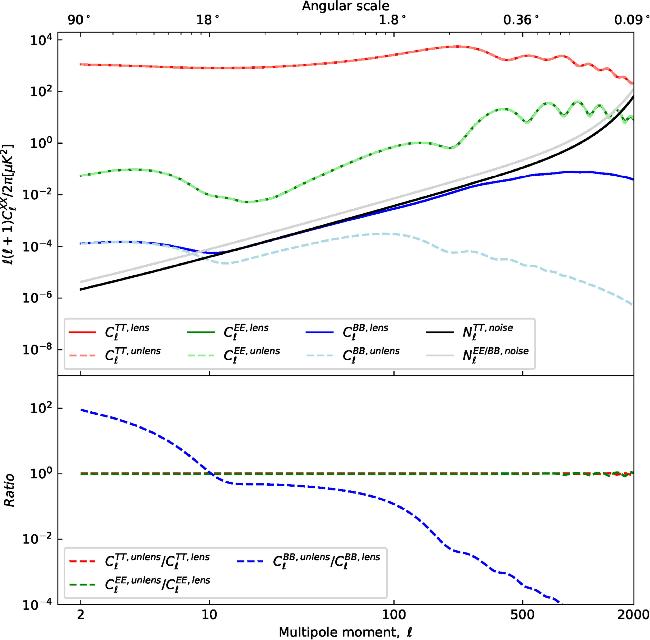

Figure 1. CMB XX angular power spectra, X ∈ {T, E, B}. Top panel: the red solid line shows the lensed TT spectrum and the light red dashed line the unlensed TT spectrum. The green solid line shows the lensed EE spectrum and the light green dashed line the unlensed EE spectrum. The blue solid line shows the lensed BB spectrum and the light blue dashed line the unlensed BB spectrum. The parameters used to generate the temperature angular power spectrum are $A_s = 2.1 \times 10^{-9}$ and $r = 0.005$. The black solid line shows the noise level of the temperature detectors and the grey solid line that of the polarization detectors. Bottom panel: the relative differences between these highly similar spectra. The red, green, and blue dashed lines show the ratio of the unlensed to the lensed spectrum for TT, EE, and BB, respectively.

2.2. CMB polarization

2.3. Noise simulation

2.4. Data preprocessing

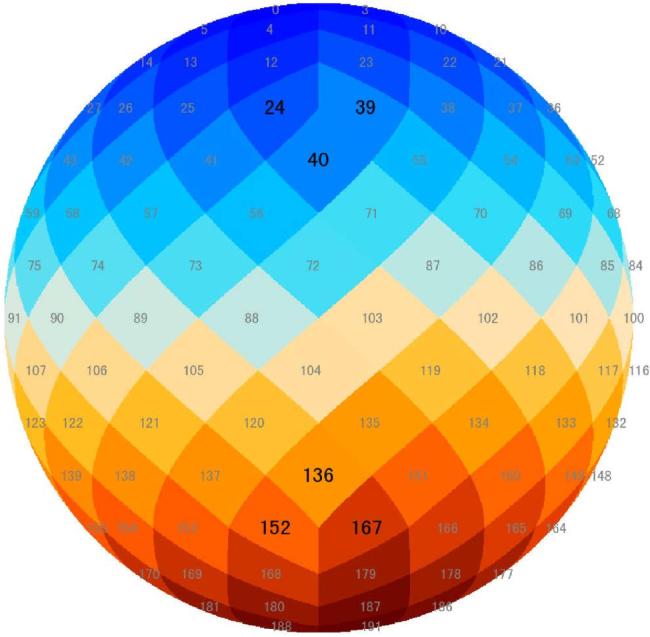

Figure 2. A half-orthogonal view projection of the sky map. Gray numbers sequentially label pixels with four neighbors, while larger black numbers highlight those with only three.

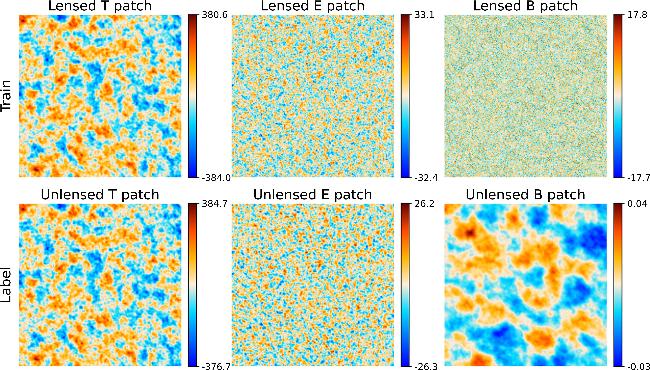

Figure 3. The T, E, and B sky map patches. Each patch has an area of 214.86 deg². The center of the sky patches is (l, b) = (101.25°, 19.471°), where l and b are the Galactic longitude and Galactic latitude, respectively. From left to right, the sky patches are the T, E, and B maps, respectively. The top row shows the lensed sky map patches, including noise and instrumental effects, which are used to construct the training dataset. The second row shows the original, unlensed sky map patches, which serve as the basis for generating the label dataset. The unit is μK.
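
The quoted patch area and center can be cross-checked with a few lines of arithmetic. The sketch below assumes, as an inference from the numbers rather than something stated in the caption, that each patch is one pixel of a HEALPix grid with N_side = 4. It uses the equal-area property of HEALPix and the standard pixel-center formulas for the equatorial belt; the names `NSIDE`, `pixel_area_deg2`, and `equatorial_ring_center` are illustrative only.

```python
import math

NSIDE = 4  # assumption: inferred from the quoted patch area, not stated in the caption


def pixel_area_deg2(nside):
    """Area of one HEALPix pixel in square degrees (all 12 * nside**2 pixels are equal-area)."""
    full_sky_deg2 = 4.0 * math.pi * (180.0 / math.pi) ** 2
    return full_sky_deg2 / (12 * nside ** 2)


def equatorial_ring_center(nside, ring, j):
    """Center (lon, lat) in degrees of pixel j (1-based) on ring `ring`.

    Valid only for equatorial-belt rings, nside <= ring <= 3 * nside.
    """
    if not nside <= ring <= 3 * nside:
        raise ValueError("ring is outside the equatorial belt")
    z = 4.0 / 3.0 - 2.0 * ring / (3.0 * nside)  # z = sin(latitude)
    s = (ring - nside + 1) % 2                  # half-pixel shift on alternate rings
    lon = math.degrees(math.pi / (2.0 * nside) * (j - s / 2.0))
    lat = math.degrees(math.asin(z))
    return lon, lat


area = pixel_area_deg2(NSIDE)
center = equatorial_ring_center(NSIDE, ring=6, j=5)
print(f"pixel area: {area:.2f} deg^2")
print(f"center: (l, b) = ({center[0]:.2f}, {center[1]:.3f}) deg")
```

Under this assumption the pixel area rounds to 214.86 deg², and pixel 5 on ring 6 is centered at (101.25°, 19.471°), matching the values in the caption.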

3. QE delensing and UNet++ structure

3.1. QE delensing

3.2. UNet++ structure

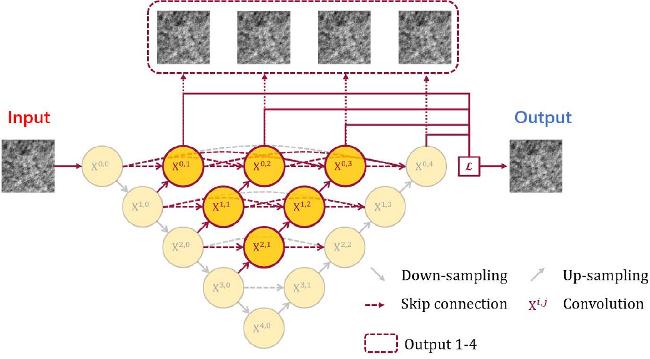

Figure 4. UNet++ network architecture. Each node in the graph represents a convolution block; downward arrows indicate down-sampling, upward arrows indicate up-sampling, dotted arrows indicate skip connections, and the dotted box encloses the four outputs. UNet++ combines UNets of different depths into a unified architecture in which all substructures share the same encoder but have their own decoders. The original skip connections are then dropped, and every two neighboring nodes are connected with a short skip connection, enabling the deeper decoder to send supervisory signals to the shallower decoder. Finally, connecting the decoders yields densely connected skip connections, so that dense features propagate along the skip pathways and feature fusion at the decoder nodes becomes more flexible. Each node in the UNet++ decoder thus combines, from a horizontal perspective, multiscale features of the same resolution from all its preceding nodes and, from a vertical perspective, multiscale features of different resolutions from its preceding nodes. This multiscale feature aggregation gradually synthesizes the segmentation, resulting in improved accuracy and fast convergence.
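
The connectivity described in the caption can be made concrete by listing the inputs of each node. The sketch below is illustrative only: it labels nodes as X[i,j], with i the resolution level and j the position along the skip pathway, and assumes a depth of 4 (inferred from the four outputs in the figure). The function name `node_inputs` and the string labels are hypothetical; no convolution is performed.

```python
DEPTH = 4  # assumption: five resolution levels (0..4), giving four outputs X[0,1]..X[0,4]


def node_inputs(i, j, depth=DEPTH):
    """Return labels of the feature maps concatenated at node X[i,j]."""
    if i < 0 or j < 0 or i + j > depth:
        raise ValueError(f"X[{i},{j}] does not exist for depth {depth}")
    if j == 0:
        # Shared encoder column: each node is fed by the down-sampled node above it.
        return ["input"] if i == 0 else [f"down(X[{i - 1},0])"]
    # Horizontal: all preceding nodes at the same resolution (dense skip connections).
    same_level = [f"X[{i},{k}]" for k in range(j)]
    # Vertical: the up-sampled node one level deeper.
    return same_level + [f"up(X[{i + 1},{j - 1}])"]


all_nodes = [(i, j) for i in range(DEPTH + 1) for j in range(DEPTH + 1 - i)]
outputs = [(0, j) for j in range(1, DEPTH + 1)]

for i, j in all_nodes:
    print(f"X[{i},{j}] <- {node_inputs(i, j)}")
```

For example, X[0,2] receives X[0,0] and X[0,1] at its own resolution plus the up-sampled X[1,1], which is the horizontal and vertical aggregation the caption refers to.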

3.3. Loss function

3.4. Training and testing

Table 1. Hyperparameter settings in the UNet++ architecture design. The optimum value of each hyperparameter is selected from its preset prior values.

| Hyperparameter | Description | Prior values | Optimum |
|---|---|---|---|
| $\eta$ | Learning rate | [$10^{-3}$, $10^{-4}$, $10^{-5}$] | $10^{-4}$ |
| $\omega$ | Weight decay | [$10^{-4}$, $10^{-5}$, $10^{-6}$] | $10^{-5}$ |
| $n_{\rm filters}$ | Filters | [16, 32, 64] | 32 |
| $b$ | Batch size | [32, 64, 128] | 64 |
| $\Omega$ | Optimizer | [Adam, NAdam] | NAdam |
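
The search space in Table 1 can be written down as a small configuration. The sketch below enumerates every combination of the prior values; whether the optimum was found by such an exhaustive grid search or by another strategy is not stated here, so the enumeration is an assumption, and the key names are hypothetical.

```python
from itertools import product

# Prior values copied from Table 1; the dictionary keys are illustrative names.
PRIOR_VALUES = {
    "learning_rate": [1e-3, 1e-4, 1e-5],
    "weight_decay": [1e-4, 1e-5, 1e-6],
    "n_filters": [16, 32, 64],
    "batch_size": [32, 64, 128],
    "optimizer": ["Adam", "NAdam"],
}

# Optimum column of Table 1.
OPTIMUM = {
    "learning_rate": 1e-4,
    "weight_decay": 1e-5,
    "n_filters": 32,
    "batch_size": 64,
    "optimizer": "NAdam",
}


def candidate_configs(grid):
    """Yield one dict per combination of the listed values."""
    keys = list(grid)
    for values in product(*(grid[k] for k in keys)):
        yield dict(zip(keys, values))


configs = list(candidate_configs(PRIOR_VALUES))
print(f"{len(configs)} candidate configurations")
```

An exhaustive search over these values would involve 3 × 3 × 3 × 3 × 2 = 162 training runs per network.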

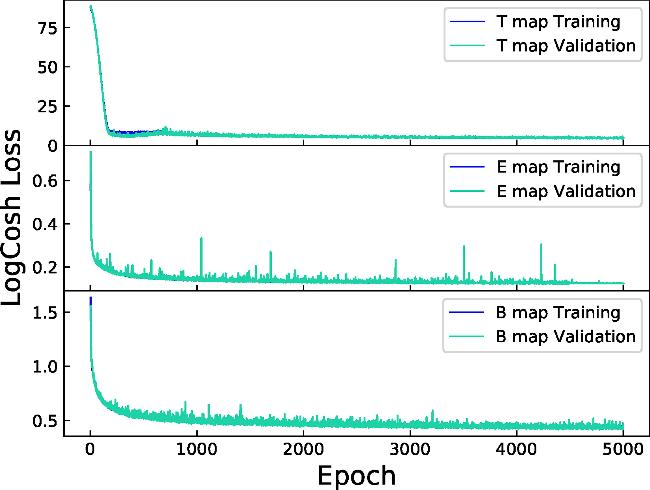

Figure 5. Evolution of the loss function over epochs for each network. From top to bottom, the training results for the T, E, and B maps are shown. The dark blue solid line shows the training set loss, and the light blue solid line shows the validation set loss.

4. Results and discussion

4.1. Sky map analysis

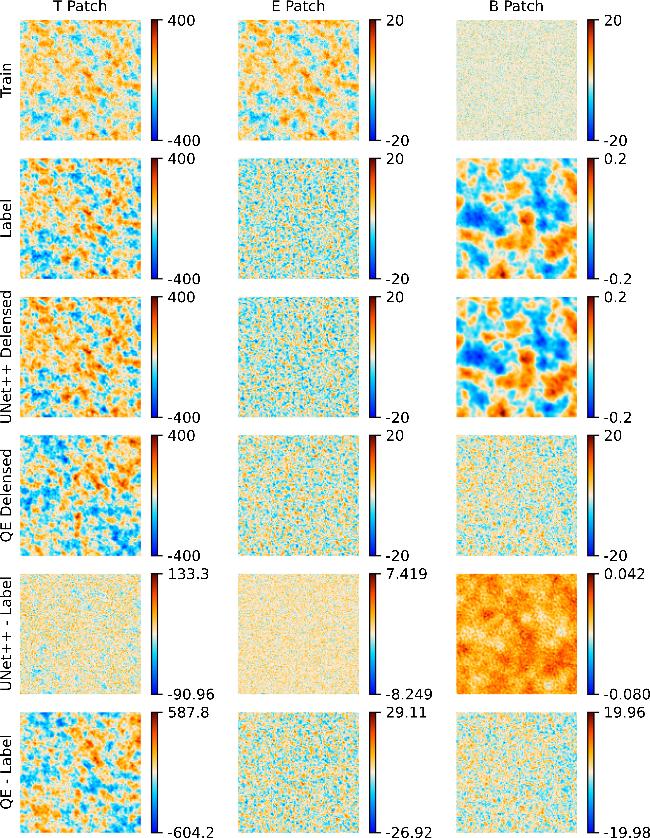

Figure 6. Comparison of training and predicted sky map patches. The columns correspond, in order, to the T, E, and B sky map patches. From top to bottom, the rows show: the lensed patch, including noise and instrumental effects; the original patch, unaffected by gravitational lensing; the delensed prediction of the UNet++ model; the delensed prediction of the QE algorithm; the residual between the UNet++ prediction and the true patch; and the residual between the QE prediction and the true patch. As in figure 3, each sky patch has an area of 214.86 deg², with its center located at (l, b) = (78.75°, 0°). The unit is μK.

Table 2. SSIM values between predicted map patches and ground truth labels.

| | $\mathrm{SSIM}_{(T,T)}$ | $\mathrm{SSIM}_{(E,E)}$ | $\mathrm{SSIM}_{(B,B)}$ |
|---|---|---|---|
| Patch(Truth, QE) | 0.7667 | 0.7390 | 0.0282 |
| Patch(Truth, UNet++) | 0.9880 | 0.9760 | 0.9544 |
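
For readers unfamiliar with the metric in Table 2, the following is a minimal sketch of the structural similarity index computed over a whole patch at once. This is a simplification: SSIM is usually evaluated in local sliding windows and then averaged, and the window size, constants, and data range used for Table 2 are not given here. The function name `global_ssim`, the default constants k1 = 0.01 and k2 = 0.03, and the choice of data range are assumptions.

```python
def global_ssim(truth, pred, data_range=None, k1=0.01, k2=0.03):
    """Single-window SSIM between two equally sized, flattened patches."""
    n = len(truth)
    if n == 0 or n != len(pred):
        raise ValueError("patches must be non-empty and of equal size")
    if data_range is None:
        # Assumption: use the dynamic range of the ground-truth patch.
        data_range = max(truth) - min(truth)
    c1 = (k1 * data_range) ** 2  # stabilizes the luminance term
    c2 = (k2 * data_range) ** 2  # stabilizes the contrast/structure term

    mean_t = sum(truth) / n
    mean_p = sum(pred) / n
    var_t = sum((t - mean_t) ** 2 for t in truth) / n
    var_p = sum((p - mean_p) ** 2 for p in pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(truth, pred)) / n

    numerator = (2.0 * mean_t * mean_p + c1) * (2.0 * cov + c2)
    denominator = (mean_t ** 2 + mean_p ** 2 + c1) * (var_t + var_p + c2)
    return numerator / denominator


truth_patch = [0.0, 1.0, 2.0, 3.0]          # toy values, not CMB data
noisy_patch = [0.1, 0.9, 2.2, 2.8]
print(global_ssim(truth_patch, truth_patch))
print(global_ssim(truth_patch, noisy_patch))
```

A value of 1 indicates identical patches, and values near 0 indicate little structural agreement, which is how the QE and UNet++ rows of Table 2 should be read.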

Table 3. PSNR values between predicted map patches and ground truth labels. The unit is dB.

| | $\mathrm{PSNR}_{(T,T)}$ | $\mathrm{PSNR}_{(E,E)}$ | $\mathrm{PSNR}_{(B,B)}$ |
|---|---|---|---|
| Patch(Truth, QE) | 21.14 | 19.25 | 14.48 |
| Patch(Truth, UNet++) | 37.70 | 38.76 | 37.87 |
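
As a reminder of what the values in Table 3 measure, the sketch below computes the peak signal-to-noise ratio, PSNR = 10 log10(peak² / MSE), for two flattened patches. The peak value used for Table 3 is not specified here; taking it as the dynamic range of the ground-truth patch is an assumption, as is the function name `psnr_db`.

```python
import math


def psnr_db(truth, pred, peak=None):
    """Peak signal-to-noise ratio in dB between two equally sized, flattened patches."""
    n = len(truth)
    if n == 0 or n != len(pred):
        raise ValueError("patches must be non-empty and of equal size")
    if peak is None:
        # Assumption: peak signal = dynamic range of the ground-truth patch.
        peak = max(truth) - min(truth)
    mse = sum((t - p) ** 2 for t, p in zip(truth, pred)) / n
    if mse == 0.0:
        return math.inf  # identical patches
    return 10.0 * math.log10(peak ** 2 / mse)


truth_patch = [0.0, 1.0, 2.0, 3.0]  # toy values, not CMB data
pred_patch = [0.0, 1.0, 2.0, 4.0]
print(f"{psnr_db(truth_patch, pred_patch):.2f} dB")
```

Because the scale is logarithmic, a difference of 10 dB corresponds to a tenfold difference in mean squared error at fixed peak value.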

4.2. Angular power spectrum analysis

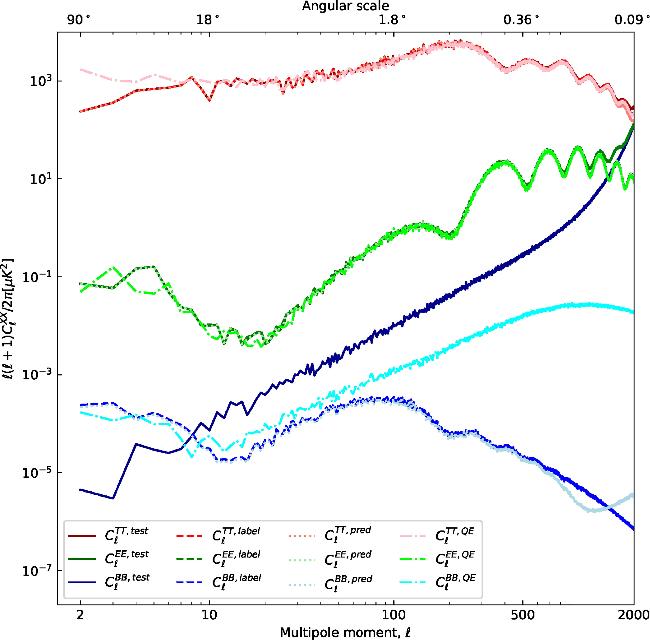

Figure 7. For the TT spectrum, the dark red solid line denotes the lensed power spectrum, the red dashed line the unlensed spectrum, the salmon dash-dot line the spectrum delensed with UNet++, and the pink dotted line the spectrum delensed with the QE algorithm. For the EE spectrum, the dark green solid line denotes the lensed power spectrum, the green dashed line the unlensed spectrum, the light green dash-dot line the spectrum delensed with UNet++, and the lime dotted line the spectrum delensed with the QE algorithm. For the BB spectrum, the dark blue solid line denotes the lensed power spectrum, the blue dashed line the unlensed primordial signal, the light blue dash-dot line the spectrum delensed with UNet++, and the cyan dotted line the spectrum delensed with the QE algorithm. The parameters for the temperature power spectrum are set to $A_s = 2.1 \times 10^{-9}$ and $r = 0.005$.

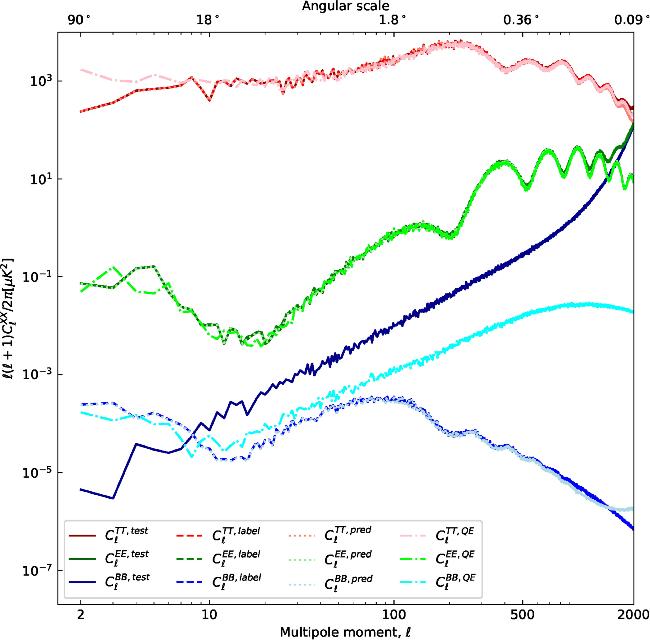

Figure 8. Angular power spectra of the unlensed and predicted CMB TT, EE, and BB. The results of UNet++ are obtained by averaging over 30 rotational transformations, while the power spectra of the QE algorithm are calculated from the full-sky map. The line colors and line styles are consistent with figure 7.